Posts: 15

Occupation: Cinematographer

Simulating Daylight from a dark set

Noel Sterrett replied to Hywel Phillips's topic in Lighting for Film & Video

I'm guessing outside the rehearsal studio for "Smash"? If so, it played quite well. Cheers.

-

Tebbe, any chance you could email me the UP test shots? Compressed would be fine. If so: info@admitonepictures.com Thanks.

-

The "optimum" workflow is an open question, and the answer is clearly not the same for everyone. The combinations and permutations run into the thousands, and arguments over the best approach will be endless. Every multifunction program I know of first de-Bayers to RGB. At that point, I would downsample to the highest resolution you can achieve without vignetting, and then crop. Because Super 16 lenses have different coverage areas, the amount of vignetting will vary from lens to lens. If a lens covers more than 1920x1080 (most will to some extent), you can crop less. Similarly, if you're shooting 2.40:1, you may not need to crop at all. Obvious relatively low-cost commercial choices that support sequential DNG files are Resolve, Lightroom, and After Effects. Resolve is free with the camera, and Lightroom is the next lowest cost. After Effects is quite simply the most useful imaging program ever written. There are also a number of free solutions for the more technically inclined. I wish there were just one answer, but there isn't. Test, test, test... Cheers.
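One way to read the "downsample, then crop" step, sketched in Python under stated assumptions: the de-Bayered frame is 2432x1366 (the commonly cited BMCC DNG size), the delivery format is 1920x1080, and `clean_w` is a hypothetical per-lens measurement of how many pixels across the centered, vignette-free region spans. The function only plans the scale factor and crop box; the actual resampling would still happen in Resolve, After Effects or similar.

```python
def plan_resize_and_crop(src_w, src_h, clean_w, out_w=1920, out_h=1080):
    """Plan a resample-then-center-crop for a de-Bayered frame.

    clean_w is a hypothetical input: the width, in source pixels, of the
    centered region this particular lens covers without vignetting.
    Assumes clean_w <= src_w.
    Returns (scale, (scaled_w, scaled_h), (left, top, right, bottom)).
    """
    # Scale so the clean width maps onto the output width, but never so far
    # down that the frame becomes shorter than the output height.
    scale = max(out_w / clean_w, out_h / src_h)
    scaled_w = round(src_w * scale)
    scaled_h = round(src_h * scale)
    # Center the delivery window inside the resampled frame.
    left = (scaled_w - out_w) // 2
    top = (scaled_h - out_h) // 2
    return scale, (scaled_w, scaled_h), (left, top, left + out_w, top + out_h)


# Example: a lens that stays clean across roughly 2200 of the 2432 pixels.
scale, size, box = plan_resize_and_crop(2432, 1366, clean_w=2200)
print(f"scale {scale:.3f}, resample to {size}, crop box {box}")
```

The `max()` matters when a lens covers the full width: 2432x1366 is very slightly wider than 16:9, so scaling by width alone would leave the frame a couple of pixels short of 1080.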

-

2.5K RAW is first de-Bayered to 2.5K RGB. Different de-Bayer algorithms, working color spaces and bit depths yield different results (e.g., Resolve is not the same as Photoshop). In any event, the resulting effective resolution is something less than 2.5K. After it is de-Bayered, the image can be cropped, downsampled or upsampled to any format (again with various algorithms, some better than others). Generally, downsampling increases the apparent resolution of the image, but the exact amount depends upon many factors. "Skyfall" was shot at 2.8K RAW, de-Bayered, and both upsampled to 4K and downsampled to 2K for theatrical distribution. I watched it in an IMAX theater and did not find it at all soft. In any event, it was good enough to gross $1B.

While the focus now seems to be only on resolution, a multitude of other factors (speed, DoF, color, flare, chromatic aberration, bokeh, mechanics, etc.) are arguably as important as resolution, or more so, in the choice of a lens. Of crucial importance for the BMCC is the lack of fast, short focal length lenses for the non-electronic MFT mount. I often choose a 17mm f/2.8 for Full Frame. That translates to 7.5mm on the BMCC. Perhaps there are a few rectilinear (non-fisheye) 7.5mm T1.3 Super 35 lenses I haven't heard of. Whether a cropped 8mm T1.3 Zeiss Ultra 16 will turn out to be sharper or softer than, for example, a non-cropped 8-11mm f/4.5 Sigma remains to be seen. But because Super 16 lenses, with their smaller format, have always tended to be sharper than Super 35 lenses, my bet is on the Ultras. In the meantime, don't substitute anyone else's eyes for your own. As I pointed out earlier, it is quite straightforward to download a few BMCC .dng frames, resample, crop, sharpen, and see for yourself how much of a resolution penalty a crop imposes. Cheers.
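The 17mm-to-7.5mm conversion can be reproduced by matching horizontal field of view, i.e. scaling the focal length by the ratio of sensor widths. A small sketch, assuming 36mm for the Full Frame width and 15.81mm for the BMCC sensor width (the figure used later in this thread); matching by diagonal instead would give a somewhat shorter number.

```python
import math

FULL_FRAME_WIDTH_MM = 36.0
BMCC_WIDTH_MM = 15.81  # sensor width used elsewhere in this thread


def equivalent_focal_length(focal_mm, from_width_mm, to_width_mm):
    """Focal length that gives the same horizontal field of view on another format."""
    return focal_mm * to_width_mm / from_width_mm


def horizontal_fov_deg(focal_mm, width_mm):
    """Horizontal angle of view of a rectilinear lens focused at infinity."""
    return math.degrees(2 * math.atan(width_mm / (2 * focal_mm)))


f_bmcc = equivalent_focal_length(17, FULL_FRAME_WIDTH_MM, BMCC_WIDTH_MM)
print(f"{f_bmcc:.1f}mm")  # 7.5mm
# Both combinations see the same horizontal angle:
print(round(horizontal_fov_deg(17, FULL_FRAME_WIDTH_MM), 1),
      round(horizontal_fov_deg(f_bmcc, BMCC_WIDTH_MM), 1))
```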

-

You can rather easily see for yourself what happens when you crop. Start with a BMCC RAW image (there are lots of .dng samples on the net). Open it with Photoshop, After Effects, Lightroom, Resolve or another program that can de-Bayer the image. For 2.2K, resize the image (in Photoshop, use "Image Size" and check "Resample Image") by a factor of 109% (2400/2200). For 1920x1080, resize by a factor of 125% (2400/1920). What you see is exactly what you will get when you "crop". If you see a major difference between 2.2K and 2.5K, your eyes are much better than mine. Cheers.
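The resize factors are simply the ratio of the full width to the crop width. A quick sketch of that arithmetic; 2400 is the post's round figure for the sensor width, and the 2432 rows repeat the calculation with the 2432-pixel width quoted elsewhere in this thread.

```python
def crop_preview_scale(full_width_px, crop_width_px):
    """Enlargement that makes the full frame look like a crop of
    crop_width_px pixels shown at the same output size."""
    return full_width_px / crop_width_px


for full in (2400, 2432):
    for crop in (2200, 1920):
        scale = crop_preview_scale(full, crop)
        print(f"{full} -> {crop}: resize to {scale:.0%}")
```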

-

In this case, you de-Bayer from 2.5K, as that is what is recorded, then crop to 2.2K. The resolution at 2.2K will not be quite as good as from 2.5K, but not by much. Here, my guess is that the quality of the lens will make up for the slight loss in sample size. Cheers.

-

The question is how 2.2K with a Zeiss Ultra 16 compares with whatever else you could use at 2.5K. But since there are not a lot of fast 6mm lenses out there, it might not be much of a contest. On the other hand, you could just shoot 2.40:1 and the vignetting will just about disappear. Cheers.

-

I'm not in the least confused. If, as you claim, cropping preserves resolution, then a 720x486 (standard definition) crop would have the same resolution as the full sensor (high definition). So why even bother with the extra pixels if you can magically turn SD into HD? By your logic, a 1x1 crop (yes, that's 1 pixel) would have the same resolution as the full sensor. All crops reduce resolution. There is a very good reason all pro cameras oversample and downscale: resolution. Arri Alexa: "For ProRes recording and HD-SDI outputs a 2880 x 1620 pixel area is read from the sensor. This is then debayered and downscaled in camera by a factor of 1.5, leading to a beautiful 1920 x 1080 image."
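The Alexa figures quoted above amount to a 1.5x oversample on each axis, whereas a crop simply discards photosites. A small sketch contrasting the two; the 2432-pixel BMCC width comes from elsewhere in this thread, and the helper names are illustrative only.

```python
def downscale_factors(readout_px, output_px):
    """Per-axis factor between a sensor readout area and the delivered frame."""
    return readout_px[0] / output_px[0], readout_px[1] / output_px[1]


def horizontal_samples_kept(crop_width_px, sensor_width_px):
    """Fraction of the sensor's photosites across the frame that a crop keeps."""
    return crop_width_px / sensor_width_px


print(downscale_factors((2880, 1620), (1920, 1080)))  # (1.5, 1.5)
# From a 2.2K crop down to the 1x1 reductio: each keeps less of the sensor.
for crop in (2200, 1920, 720, 1):
    print(crop, f"{horizontal_samples_kept(crop, 2432):.1%}")
```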

-

You lose resolution by cropping. It may not be a great deal, but that is precisely why Canon, for example, uses a 4K sensor in the C300 to output only 1920x1080. Remember, with the BM Bayer sensor, there are only 1216 green pixels in a 2432-pixel line. In addition, one of the main reasons to try Super 16 lenses on the BMCC is the lack of fast, ultrawide (~6mm, 8mm) lenses that match its small, non-standard format. When you crop, the field of view narrows, so that advantage lessens. I'm not saying it's not worth trying, just that there is no free lunch. Cheers.
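The 1216 figure follows from the Bayer layout: every row alternates green with either red or blue, so half of each row's photosites are green. A sketch that counts them, assuming an RGGB tile; the BMCC's actual color filter order is an assumption here, but any 2x2 Bayer arrangement gives the same green count per row.

```python
def bayer_row_counts(width, row, pattern="RGGB"):
    """Count R, G and B photosites in one row of a 2x2 Bayer mosaic.

    pattern lists the 2x2 tile in row-major order:
    top-left, top-right, bottom-left, bottom-right.
    """
    # Even rows use the top half of the tile, odd rows the bottom half.
    tile_row = pattern[0:2] if row % 2 == 0 else pattern[2:4]
    counts = {"R": 0, "G": 0, "B": 0}
    for x in range(width):
        counts[tile_row[x % 2]] += 1
    return counts


print(bayer_row_counts(2432, 0))  # {'R': 1216, 'G': 1216, 'B': 0}
print(bayer_row_counts(2432, 1))  # {'R': 0, 'G': 1216, 'B': 1216}
```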

-

Yes, but at a price. With Bayer sensors, it's better to downres to 2K rather than crop. But I've done crops, and they look awfully good. Cheers.

-

Let me know what lenses you have. Cheers. info at admitonepictures dot com

-

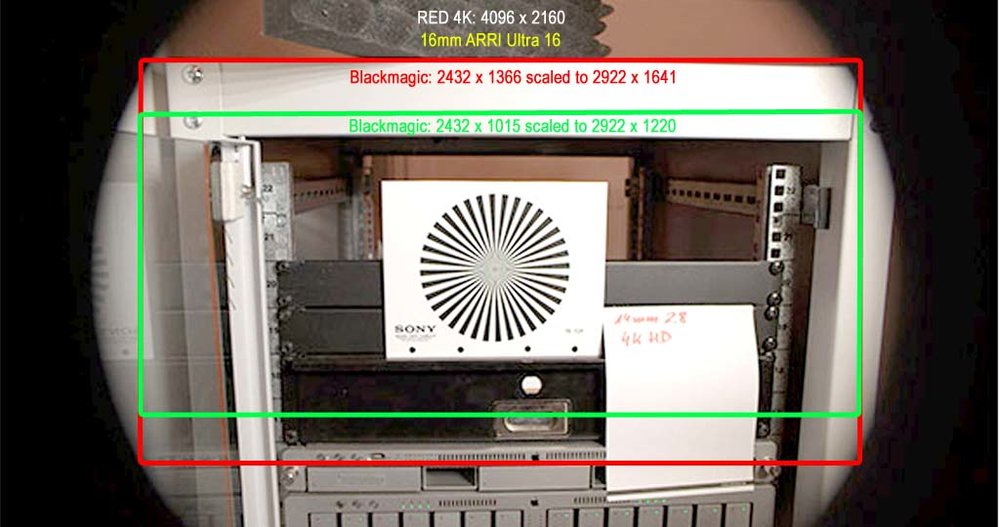

Oops. I failed to consider pixel size in the above. The RED sensor is 27.7mm / 5120 pixels = 5.41 micrometer pixel size. The BM sensor is 15.81mm / 2432 pixels = 6.50 micrometer pixel size. To extrapolate the RED image to the BM sensor, the larger BM pixels must be scaled up by a factor of 6.50 / 5.41 = 120%. That means 2432 BM pixels take up the same space as about 2922 RED pixels. Cheers.
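The pixel-pitch arithmetic can be checked in a few lines. Pitch is sensor width divided by pixel count; the widths and pixel counts below are the ones given in the post.

```python
def pixel_pitch_um(sensor_width_mm, width_px):
    """Photosite pitch in micrometers: sensor width divided by pixel count."""
    return sensor_width_mm / width_px * 1000.0


red_pitch = pixel_pitch_um(27.7, 5120)   # RED figures from the post
bm_pitch = pixel_pitch_um(15.81, 2432)   # Blackmagic figures from the post
scale = bm_pitch / red_pitch

# Number of RED photosites that span the same physical width as the BM sensor.
red_px_equivalent = 2432 * scale

print(f"RED {red_pitch:.2f} um, BM {bm_pitch:.2f} um, scale {scale:.0%}")
print(f"2432 BM pixels ~ {red_px_equivalent:.0f} RED pixels")
```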

-

Thanks for the shot. I've done a quick-and-dirty composite of the BM 2.5K (2432) sensor area over the 3K (3072) image. Looks pretty good to me! Did you try any other lenses? Cheers.

-

While the Blackmagic sensor has an ~18mm image circle, the lens need not cover the entire circle, only the area of interest. If you are shooting for 2.40:1, for example, the required image circle shrinks to ~17mm. Allow for gradual falloff and the fact that the entire image will likely never actually be seen, and some of the lenses come quite close. Cheers.
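The image circle a lens must cover is the diagonal of the area actually used. A sketch, assuming the commonly cited 15.81mm x 8.88mm active area for the BMCC sensor; for a wider extraction such as 2.40:1 the width stays fixed and only the height shrinks.

```python
import math

BM_WIDTH_MM = 15.81
BM_HEIGHT_MM = 8.88  # assumed active height of the BMCC sensor


def image_circle_mm(width_mm, height_mm):
    """Diameter of the circle that just covers a width x height rectangle."""
    return math.hypot(width_mm, height_mm)


full = image_circle_mm(BM_WIDTH_MM, BM_HEIGHT_MM)
scope = image_circle_mm(BM_WIDTH_MM, BM_WIDTH_MM / 2.40)  # full width, 2.40:1 extraction
print(f"full sensor {full:.1f}mm, 2.40:1 {scope:.1f}mm")  # full sensor 18.1mm, 2.40:1 17.1mm
```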

-

While it's clearly not ideal from a resolution standpoint, a 1920-pixel crop of the 2432-pixel sensor is 12.5mm wide, just a tad less than the 12.52mm of Super 16. You could decide on a crop on a lens-by-lens or even scene-by-scene basis, depending on the degree of tolerable falloff. A bit of a kludge? Perhaps, but the upside of using Ultra 16 Primes could easily outweigh the downside. Cheers.
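The 12.5mm figure is the crop's share of the sensor width. A short sketch of the conversion in both directions, using the 15.81mm / 2432-pixel sensor figures from this thread; the helper names are illustrative. Choosing a crop per lens then amounts to picking the pixel width whose physical width that lens covers cleanly.

```python
SENSOR_WIDTH_MM = 15.81
SENSOR_WIDTH_PX = 2432
SUPER16_WIDTH_MM = 12.52


def crop_width_mm(crop_px):
    """Physical width on the sensor covered by a crop of crop_px photosites."""
    return crop_px * SENSOR_WIDTH_MM / SENSOR_WIDTH_PX


def crop_px_for_width(width_mm):
    """Crop width in photosites that spans width_mm of the sensor."""
    return width_mm * SENSOR_WIDTH_PX / SENSOR_WIDTH_MM


print(f"1920 px crop: {crop_width_mm(1920):.2f}mm")                      # 12.48mm
print(f"Super 16 width: {crop_px_for_width(SUPER16_WIDTH_MM):.0f} px")  # 1926 px
```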