Search the Community

Showing results for tags 'virtual production'.


Finally, the cyberspace VR surfing that was promised to us in the '90s and 2000s is at our fingertips. Worlds and avatars beyond Euclidean geometry and imagination are reality at last. Meet people from across the world in teasing 3-D, explore vast worlds of fantasy together, or dance your tail off to your favourite music with a colourful crowd. Finding people online is no longer shackled to text or voice; now everything is possible, all while experiencing 'real' proximity. But digital space can also be a gateway to meeting people in real life, not as distant strangers but as close friends, making for highly emotional and gorgeous experiences that become lasting core memories, be they saved in the digital domain, on Polaroids or on the retina. I wanted to mix all these wonderful mediums of expression together and show what wonderful things can come from VR and beyond.

- 2 replies


- vr

- virtual production

- (and 7 more)


Kindly ignore if the topic is irrelevant. As a student of cinema and cinematography, I have noticed that technological advancement is becoming more of a concern in cinematography than aesthetics or, in some cases, story. Examples include films like Nope, TV series shot in virtual production, or something like Way of Water. Hence I wanted to ask: in what way are we going to see cinematography evolve in the next decade or two, and should we still study films by Freddie Young, Vittorio Storaro, Frederick Elmes, Chris Doyle, Bruno Delbonnel, Claire Mathon etc., where aesthetics is still kept under consideration? Also, will people who are starting out in cinematography need to add more skills to their belt? Thanks in advance. Saikat C Cinematographer | Colorist

- 32 replies


- cinematograhy

- technology


(and 1 more)

Tagged with:


I'm staff at the virtual reality rave community PHC. We use VRChat as our platform. My jobs concern media/photo/video/tech and the stream team. Monthly events run deep into the night: lots of fun when things go all right, not so much when there are technical issues. I made a community showcase for Furality Aqua, which took place some months ago. I wanted to show people having a good time together and enjoying a common passion: music and being together. All of these shots were made in a virtual space with a VR headset and controllers. My setup is wireless so I can walk around the entire living room, but I also had a VRChat mod that allowed me to make a rail setup for the camera (now no longer possible). One big problem is performance: with 40+ players in my instance, the frame rate easily tanks to 15-25 fps. The video is a compilation of several events, which are run monthly or bi-weekly. Tell me what you think ^^

- 1 reply


- vr

- virtual reality


(and 4 more)

Tagged with:


Hi to everyone who's interested in Virtual Production. Setting up a VP studio can be pretty time-consuming, especially when choosing a tracking system. There are various options to choose from, so we shot this quick demo on how to use Antilatency with Unity. You can track cameras, objects and even body parts. In this case, we used a tracking area (attached to the truss system), one tracker for the camera, and two trackers for each leg. All tracking data was transmitted to Unity via a proprietary radio protocol and used for real-time rendering. We didn't use post-processing or cleanup, only real-time data. Let us know what you think. We'd love to hear about your experience with Virtual Production, and with Antilatency if you've had the chance to work with it before. We're also open to suggestions on what demos we should do next.


- virtual production

- unity


(and 2 more)

Tagged with:


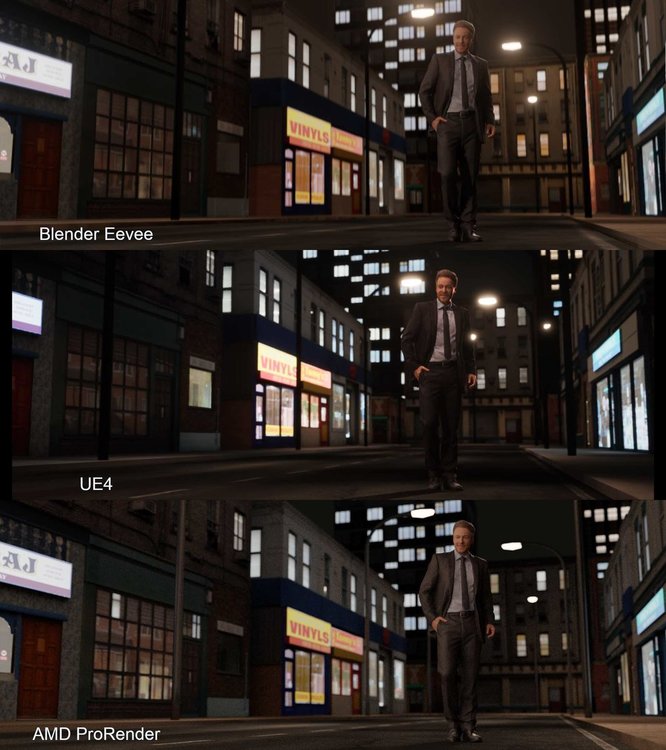

Since the industry shut down all of my live action work, I decided to learn a new skill set and dived deep into the world of virtual production. I said it in college and still believe today that narrative film will increasingly move towards virtual production. Big budget films already utilize a ton of CG environments, and The Mandalorian showed us how incredible real-time rendering on an LED wall can be.

As a primer, I found this doc from Epic Games gives a fantastic intro to virtual production: https://cdn2.unrealengine.com/Unreal+Engine%2Fvpfieldguide%2FVP-Field-Guide-V1.2.02-5d28ccec9909ff626e42c619bcbe8ed2bf83138d.pdf

Matt Workman has been documenting his journey with indie virtual production on YouTube, and it's becoming clear how easy it is for even the smallest of budgets to utilize real-time rendering. I wouldn't be surprised to see sound stages or studios opening up that offer LED volumes and pre-made virtual sets at an affordable price for even micro budget features.

Here's what I did so far: Attached are renders from three engines: Blender's Eevee, Unreal Engine 4, and AMD ProRender. (Please excuse the heavy JPG compression; shoot me a DM for an uncompressed version.) I made the building meshes from scratch using textures from OpenGameArt.org and following Ian Hubert's lazy building tutorials. The man is a 3D photoscan from Render People. All of these assets were free. Lighting-wise, I was more focused on getting a realistic image than a stylized one. Personally, I think the scene is too bright and the emission materials on the buildings need more nuance.

Blender's Eevee: Honestly, I'm beyond impressed with Eevee. It's incredibly fast. For those who don't know, Eevee is Blender's real-time render engine. It's what Blender uses for rendering the viewport, but it's also designed to be a rendering engine in its own right. Most of Eevee's shaders and lights seamlessly transfer over to Blender's Cycles (their PBR engine).

UE4: After building and texturing the meshes in Blender, I imported them into UE4 via .fbx. There was a bit of a learning curve and some manual adjustment of the materials, but ultimately I was able to rebuild the scene. The only hitch was the street lamps. In Blender, I replicated the street lamps using an array modifier, which duplicated the meshes and lights. The array modifier doesn't carry the lights over into UE4 via the .fbx, so I had to import a single street lamp and build a blueprint in UE4 that combined the mesh and light. In the attached image, the street lamps aren't in the same place in UE4 because I was approximating. My next step is to find a streamlined way to import/export meshes, materials, camera, and lights between Blender and UE4. I believe some Python is in order! As expected, UE4 looks great!

AMD ProRender: Blender's PBR engine, Cycles, is great. However, it only works with CUDA and OpenCL. I currently only have a late 2019 MacBook Pro 16". Its GPU is the AMD Radeon Pro 5500M 8GB. Newer Apple computers only use the Metal API, which Blender currently has no support for. Luckily, AMD has their own render engine called ProRender. Needless to say, the results are great and incredibly accurate. This engine isn't real time. Render time for the AMD shot was 9 minutes. This render is definitely too bright and needs minutiae everywhere.

My final thoughts: Even though this is a group for Unreal, I'm astonished by Eevee, particularly how incredibly fast it is for running only on my CPU. (Again, Blender has no support for Apple Metal, so it defaults to rendering on the CPU on my laptop.) The next iteration of Blender will be utilizing OpenXR. According to the Blender Foundation, they'll slowly be integrating VR functionality into Blender, and version 2.83 will allow for viewing scenes in VR. With that in mind, I'm definitely going to experiment with virtual production inside Blender.

As for Metal support, I believe Blender will be moving from OpenCL/GL to Vulkan in the near future. From what I've found, it's easy to translate from Vulkan to Metal. (This part is a bit above my head as a DP, so I'll just have to wait or use a Windows machine.)

Does anyone have any useful guides that have a streamlined process for moving things between Blender/UE4? I'm from live action and love how easy it is to bring footage from Premiere/FCPX to DaVinci and back via XML. Is there something similar, or is .fbx the only way?
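On the street lamp problem specifically, one possible direction is to compute the lamp positions rather than approximate them by eye. The following is only a rough sketch in plain Python; it does not call Blender's `bpy` or Unreal's scripting API. It assumes the array modifier uses a constant offset in metres, and it assumes the usual Blender-to-Unreal conversion of scaling metres to centimetres and mirroring the Y axis. The names `LampPlacement`, `array_positions`, `blender_to_unreal` and `lamp_manifest` are hypothetical helpers made up for illustration, and the sample numbers are invented.

```python
import json
from dataclasses import dataclass, asdict

# Blender's default scene unit is metres; Unreal Engine works in centimetres.
METRES_TO_CM = 100.0


@dataclass
class LampPlacement:
    """One street lamp location, already expressed in Unreal units (cm)."""
    name: str
    x: float
    y: float
    z: float


def array_positions(start, offset, count):
    """Positions of a constant-offset array: start + i * offset for each copy."""
    return [tuple(s + i * o for s, o in zip(start, offset)) for i in range(count)]


def blender_to_unreal(pos):
    """Assumed mapping: scale metres to cm and mirror Y (right- to left-handed)."""
    x, y, z = pos
    return (x * METRES_TO_CM, -y * METRES_TO_CM, z * METRES_TO_CM)


def lamp_manifest(start, offset, count, prefix="StreetLamp"):
    """Build one named placement per array copy, converted to Unreal units."""
    lamps = []
    for i, pos in enumerate(array_positions(start, offset, count)):
        ux, uy, uz = blender_to_unreal(pos)
        lamps.append(LampPlacement(f"{prefix}_{i:02d}", ux, uy, uz))
    return lamps


def to_json(lamps):
    """Serialise the placements so another tool can read them."""
    return json.dumps([asdict(lamp) for lamp in lamps], indent=2)


if __name__ == "__main__":
    # Illustrative values only: four lamps, 8 m apart along X, starting at y = 2 m.
    print(to_json(lamp_manifest((0.0, 2.0, 0.0), (8.0, 0.0, 0.0), 4)))
```

The resulting JSON could then be read on the Unreal side to place one instance of the combined mesh-and-light blueprint per entry. Whether the Y mirror is right for a given scene depends on the .fbx export settings, so it is worth checking against a single imported lamp first.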

- 23 replies


- 2


- virtual production

- unreal engine


(and 3 more)

Tagged with: