Thomas Worth

Basic Member

Posts: 372

Profile Information

Occupation: Director

Location: Los Angeles

Contact Methods

Website URL: http://rarevision.com

-

Phil's right. The problem is most likely the software you're using to view the clips on your computer. I see this all the time when footage from Canon DSLRs is viewed in VLC. Since VLC assumes the video's usable range is 16-235, it throws away the full range shadow and highlight detail that falls outside it. The result is crushed blacks and clipped highlights. QuickTime Player on the Mac displays Canon footage properly, as do NLEs like Premiere.

If you want to ensure this will never, ever be a problem for anyone else working with this footage, the thing to do is transcode the footage using 5DtoRGB. It will remap the 8 bit full range video to 10 bit broadcast range and compress to ProRes. This preserves all of the shadow and highlight detail. Anything that reads ProRes-compressed MOVs will then display it correctly.
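The remap described above can be sketched as a simple linear scale. This is only an illustration of the arithmetic for luma, assuming 0-255 maps onto 64-940; the function name is hypothetical and this is not 5DtoRGB's actual code.

```python
def full8_to_broadcast10(value: int) -> int:
    """Map an 8-bit full range luma code (0-255) onto 10-bit broadcast range (64-940).

    Hypothetical helper for illustration only; not taken from 5DtoRGB.
    """
    if not 0 <= value <= 255:
        raise ValueError("expected an 8-bit code value")
    # Linear remap: 0 lands on 64 and 255 lands on 940.
    return round(64 + value * (940 - 64) / 255)


codes = [full8_to_broadcast10(v) for v in range(256)]
print(codes[0], codes[-1])   # endpoints of the remapped range
print(len(set(codes)))       # how many distinct output codes survive
```

Because the 10 bit broadcast range has more steps than the 8 bit source has values, every input code gets its own output code, which is why nothing is lost in the shadows or highlights.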

-

Chroma Keying on a DSLR?

Thomas Worth replied to Richard Plucker's topic in Visual Effects Cinematography

5DtoRGB doesn't clip shadows/highlights if you choose Full Range. It will clip if you choose Broadcast Range with full range material (like from a Canon DSLR). That's because it assumes the maximum range is within 64-940 (16-235 in 8 bit) and scales accordingly.

15 replies
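To see why treating full range material as broadcast range clips, here is a minimal 8 bit sketch. It assumes the decoder stretches 16-235 out to 0-255 and clamps whatever falls outside; the function name is hypothetical and does not come from 5DtoRGB or VLC.

```python
def expand_broadcast_to_full(value: int) -> int:
    """Stretch 16-235 to 0-255 and clamp anything outside (illustrative only)."""
    scaled = (value - 16) * 255 / (235 - 16)
    return min(255, max(0, round(scaled)))


# Full range footage uses every code from 0 to 255, so some codes get clamped.
crushed = [v for v in range(256) if v < 16]     # shadow codes forced to 0
clipped = [v for v in range(256) if v > 235]    # highlight codes forced to 255
print(len(crushed), len(clipped))
print(expand_broadcast_to_full(10), expand_broadcast_to_full(250))
```

Everything the camera recorded below 16 ends up at black and everything above 235 ends up at white, which is the crushed/clipped look.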

Tagged with: Chroma key, greenscreen (and 1 more)

-

I've got a 1200w HMI par with a magnetic ballast and I want to run it off a cheap Coleman 1800w generator. I've tried it and it works, but there's a constant flicker. What are my options? Is there anything I can do to remedy this? I was running the ballast at 60 Hz. I didn't try switching to 50 Hz mode, if that matters. Is there a device I can put inline between the generator and the ballast to filter the power?

-

Illya Friedman at Hot Rod Cameras recently lent a pre-release Sony FS700 to several members of this forum. Fortunately, I was one of them. Here's what I was able to come up with:

Some thoughts about the camera:

The FS700 is fairly small when all of the accessories are removed. The on-camera LCD is quite viewable in direct sunlight (I had the brightness turned all the way up). Illya had one of his PL mounts installed, so we needed to keep the base/rods connected since the mount is supported by the rods. It was still light enough to fit on a cheap steadicam even with the PL adapter, rods and Zeiss CP.2 100mm attached (max 10 lbs for this particular arm/spring combo).

I shot at the lowest ISO, 400. I used the "CINE 1" gamma, if I recall correctly. It wasn't the flattest, but considering the max AVC bitrate is supposedly 28 Mbit/sec I didn't want the image too flat. I think the bitrate at 23.976fps came in at around 22-23 Mbit/sec. Not too much, but I feel I was able to make it work.

I transcoded all the footage with 5DtoRGB before cutting in Premiere CS6. There's some roto/tracking in the video for clean-up. I primarily used AE, but had to use Mocha (AE bundled version) for a shot or two. VFX shots were transcoded to DPX with 5DtoRGB. The point tracker in AE was all over the place with some of the stuff because of the noise/compression, but for the most part everything worked out fine.

I was expecting a mess in color correction, but it actually wasn't too bad. As you can see from the video, the grade is fairly heavy. On a few shots, I tried adding a window to part of the background to bring it up but had to remove it because noise was a problem. You could clearly see where the window was because of the increased noise. Oh well, I was warned this wasn't the final version of the camera and to expect extra noise. I didn't think the noise was any worse than any other AVC camera at ISO 400, to be honest.

And of course, 240 fps is nice. It may be possible to do 300 fps when shooting 29.97 (then conforming to 23.976), but I didn't try it. The camera actually writes a 23.976 file when shooting high speed. I assume it buffers 10 seconds internally and then compresses/writes after as 23.976 AVC to the card. It's a little laggy when it writes (bringing the shoot to a halt), but hey, it's 240 fps. :)

Overall, I'm damn impressed. If anyone has the opportunity to shoot with one, do it. And then go buy one.
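For a sense of scale, the slow motion factor of 240 fps material written as a 23.976 file is just the ratio of the two frame rates. The sketch below assumes "23.976" means 24000/1001 fps; the 8 second burst length is a made-up example value, not a camera spec.

```python
from fractions import Fraction

PLAYBACK_FPS = Fraction(24000, 1001)  # assumed exact value of "23.976" fps


def slowdown(capture_fps, playback_fps=PLAYBACK_FPS):
    """How many times slower the action plays back than it happened."""
    return Fraction(capture_fps) / playback_fps


def screen_seconds(burst_seconds, capture_fps, playback_fps=PLAYBACK_FPS):
    """Running time on screen for a high speed burst of the given real duration."""
    return burst_seconds * slowdown(capture_fps, playback_fps)


print(float(slowdown(240)))            # slow motion factor at 240 fps
print(float(screen_seconds(8, 240)))   # hypothetical 8 s burst, in seconds of playback
```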

-

If shooting black and white, the best thing to do is extract the unaffected Y (luma) channel from the H.264 file. You can do this with 5DtoRGB. I played around with it, and during my tests discovered that the Technicolor style boosts black up to 16 from where it should be (0). This discards precision. Interestingly, it does not do this to the highlights (they're still at 255). I don't think this is a good idea with 8 bit data, especially with such a flat curve. We need every bit of data we can get.
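The precision cost of lifting black to 16 is easy to put a number on. This is a back-of-the-envelope sketch that simply counts the code values left between black and white; the function name is hypothetical.

```python
import math


def usable_levels(black: int, white: int) -> int:
    """Number of distinct code values between black and white, inclusive."""
    return white - black + 1


full = usable_levels(0, 255)     # black where it should be
lifted = usable_levels(16, 255)  # black lifted to 16, highlights still at 255
print(full, lifted)
print(math.log2(full), math.log2(lifted))  # effective bits in each case
```

With a flat curve that already squeezes the scene into few code values, giving up 16 of them at the bottom is a real loss in 8 bit.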

-

ProRes from DPX... sharper than DPX?

Thomas Worth replied to Steve Zimmerman's topic in Post Production

We need some more information. What type of machine was used to do the scans? Not sending DPX files initially sounds very suspicious. This sounds like a telecine job to HDCAM / SR which was then captured on a Mac with a Kona card to ProRes. It could be that the DPX files were created from the ProRes material or from the HD tape using a different system. Without knowing exactly what type of equipment was used, we won't know for sure and won't be able to predict how much of a pain it would have been for the facility to redo the work.

They should. If they don't, someone made a mistake.

Why? Was a grade part of the deal? Was the deal for DPX original scans plus a color-corrected ProRes output?

As Phil pointed out, anyone who knows what they're doing will work from uncompressed files. What if you decided later on to grade on a system that didn't support ProRes?

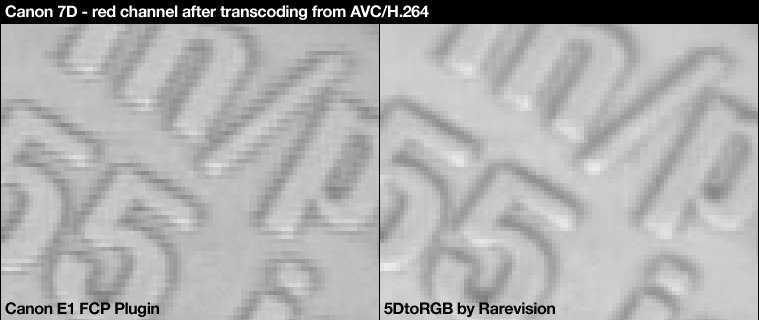

Phil, it seems there's a slight variance in the decoding matrix between the two programs. The lips seem to have a slightly orange tint to them in the shot on the right. Is it possible to send me that take? I'd like to run some tests. FYI, Phil is using a development version of 5DtoRGB for Windows that does the image processing on the GPU, and the new code isn't fully tested. Results may improve/change later.

-

For most intents and purposes, 24fps and 25fps (progressive) are completely interchangeable. I've shot in both formats for delivery in the other, and in all cases the result was fine. In fact, the only real difference between the two is audio. 24fps can be remapped to 25fps with a 1:1 frame relationship (which is standard practice), and after a roughly 4% audio pitch/speed correction, the difference between the two is imperceptible.

The timing change takes place all the way at the end of the post process, after the final sound mix. So, you'd perform all post at 23.98 or 24.00 (including final mix), and then do the pitch correction.

The only issue you may encounter is the timing of certain types of music. The pitch correction doesn't affect the sound quality, but if people are used to listening to a certain song at a certain tempo (like if they've heard the song a million times), the speed difference may be perceptible. The solution to this is to perform the sound mix with a sped up (or slowed down) version of the song, so when the pitch/speed correction is applied, the song is returned to its original tempo.
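The numbers behind the 24 to 25 conform can be worked out in a few lines. This is a rough sketch under the assumption that every frame is kept and simply played faster; the function names and the 100 minute / 120 bpm figures are made-up examples.

```python
from fractions import Fraction
import math


def speed_ratio(source_fps, target_fps):
    """Playback speed-up when each source frame becomes one target frame."""
    return Fraction(target_fps) / Fraction(source_fps)


def pitch_shift_semitones(ratio):
    """Pitch rise caused by the speed-up if it is left uncorrected."""
    return 12 * math.log2(ratio)


def retimed_minutes(minutes, ratio):
    """Running time after the speed change."""
    return minutes / ratio


def premix_tempo(original_bpm, ratio):
    """Tempo to mix a song at so it returns to its original tempo after the speed-up."""
    return original_bpm / ratio


ratio = speed_ratio(24, 25)
print(float(ratio))                          # speed-up from 24.00 fps
print(float(speed_ratio(Fraction(24000, 1001), 25)))  # speed-up from 23.976 fps
print(pitch_shift_semitones(ratio))          # uncorrected pitch rise in semitones
print(float(retimed_minutes(100, ratio)))    # hypothetical 100 minute cut
print(float(premix_tempo(120, ratio)))       # hypothetical 120 bpm song
```

The last line is the music trick from the post: mix with the song slowed down by the same ratio, and the final speed-up puts it back at the tempo people know.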

-

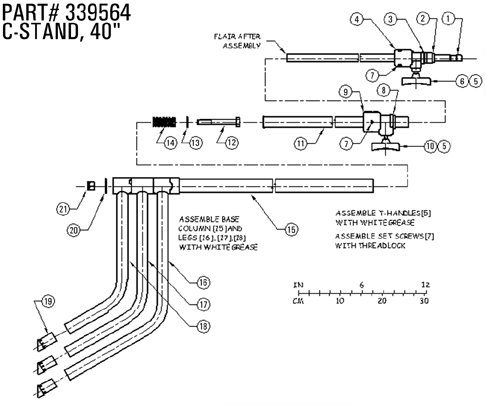

The problem is that the bolt head is inside the main body of the C-stand. If I turn the bottom nut, the bolt itself turns because there is no star / lock washer, etc., at least not on this stand. There's no way to hold the bolt head unless I have something long enough to reach all the way down the length of the main body/tube (after the telescoping stages are removed). I attached a diagram that shows how it's put together. This is a Matthews stand, but all Century-style stands share this same design as far as I know.

-

I've got a couple stands that could use some tightening. However, the bolt that holds the base together needs to be held in place from inside so it won't turn when I try to turn the nut on the bottom. How do I hold the head of the bolt? It seems that I'd need a very long socket extension. Any ideas?

-

I know this has already been asked a few times, but I am interested specifically in the diffusion properties of each type of diffusion material: 1/4 grid cloth, poly silk, and sail. I'm mainly interested in diffusing direct sunlight, using a large butterfly / overhead. I'd like the softest light I can possibly get while losing only about a stop. The fabrics can all be found in 1.0 stop versions, so I'm guessing the real difference is how much diffusion / ambient light they produce. Any info on this would be much appreciated!

-

Here are a couple blog posts:

http://16x9cinema.com/blog/2010/8/30/rarevisions-5dtorgb-a-better-way-to-convert-canon-5d-mark-ii.html

http://motionlifemediablog.wordpress.com/2010/09/02/5dtorgb-color-tests/

And the Windows GUI version will come shortly after I get the Mac version stable!

-

As a follow up to my last post about T2i/7D editing, I wanted to let everyone know that a beta of 5DtoRGB is now available for Mac OS X (64 bit). I've also included dMatrix, which is a free app that saves custom decoding matrices (which can be alternatives to BT.601 or BT.709). It's still in beta, so there may be bugs. Don't use it in a production environment. Download it here: http://rarevision.com/5dtorgb/
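For anyone wondering what a decoding matrix changes, here is a minimal sketch that converts one YCbCr pixel to RGB with the standard BT.601 and BT.709 luma coefficients. It assumes 8 bit full range values with chroma centered on 128. The function and table names are hypothetical, and this says nothing about how dMatrix stores its matrices.

```python
# Standard luma coefficients (Kr, Kb) for each matrix; Kg is derived as 1 - Kr - Kb.
COEFFS = {
    "BT.601": (0.299, 0.114),
    "BT.709": (0.2126, 0.0722),
}


def ycbcr_to_rgb(y, cb, cr, standard="BT.709"):
    """Decode one full range 8-bit YCbCr pixel to RGB floats (illustrative only)."""
    kr, kb = COEFFS[standard]
    kg = 1.0 - kr - kb
    r = y + 2.0 * (1.0 - kr) * (cr - 128)
    b = y + 2.0 * (1.0 - kb) * (cb - 128)
    g = (y - kr * r - kb * b) / kg
    # Clamp to the displayable range without rounding.
    return tuple(min(255.0, max(0.0, c)) for c in (r, g, b))


pixel = (128, 128, 200)  # made-up reddish pixel
print(ycbcr_to_rgb(*pixel, standard="BT.601"))
print(ycbcr_to_rgb(*pixel, standard="BT.709"))
```

Neutral grays come out identical with either matrix; only saturated colors shift, which is why a matrix mismatch tends to show up as a tint in things like skin and lips rather than as an overall exposure change.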

-

Apple's built-in H.264 decoder is a general purpose decoder. That means it is designed to do many different things, including playing back 1080p trailers from Apple's website in realtime. Unfortunately, this also means compromises are made on quality for performance reasons. The image on the left is a reasonable attempt by Apple to reconstruct chroma from a 4:2:0 source (i.e. video compressed with H.264). However, it's not as good as it could be because decoding an image using extremely slow double precision math could cause stuttering during playback on some slower machines. There simply isn't enough CPU power to do all these calculations and afford realtime playback, which is one of the main things the Apple decoder was designed to do. Since most programs use the built-in Apple decoder (including FCP, Canon's plugin, MPEG Streamclip, etc.), they all suffer from this problem and there's nothing you can do about it. 5DtoRGB doesn't care about realtime playback, so it can use the slowest, highest quality processing possible to deliver the cleanest image. It doesn't use QuickTime at all, and therefore doesn't use Apple's H.264 decoder.

-

Let me elaborate. This tool is in development, and therefore is still missing features. That said, if anyone would like to test it, please contact me offline and we'll talk. I developed the tool primarily for VFX work, but since it effectively gives you the highest possible quality image out of the camera, it's useful for any purpose that requires high image quality.

I have read similar claims. However, we don't really know what exactly Canon are doing in the camera. Some down/upscaling tests would probably give us a general idea of how much real resolution there is. I can say that the camera generates a full raster 1920x1088 (yes, that's "1088," not "1080") image for Y, and 960x544 for Cr and Cb (remember, this is YUV 4:2:0). 5DtoRGB chroma interpolates Cr and Cb to 1920x1088 so all three channels have matching dimensions.
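The plane sizes above follow from simple arithmetic, and the interpolation step can be illustrated with a toy example. This is a rough sketch only: it assumes the 1088 comes from H.264 coding in 16x16 macroblocks, ignores chroma siting, and uses plain linear interpolation, which is not necessarily what 5DtoRGB does. All function names are hypothetical.

```python
import math


def coded_height(display_height, block=16):
    """Round a frame height up to a whole number of 16-line macroblock rows."""
    return math.ceil(display_height / block) * block


def chroma_size_420(width, height):
    """4:2:0 stores Cb and Cr at half the luma width and half the luma height."""
    return width // 2, height // 2


def upsample_row(row):
    """Double the length of a row using linear interpolation between neighbors."""
    n = len(row)
    out = []
    for i in range(2 * n):
        lo = i // 2
        hi = min(lo + 1, n - 1)   # repeat the last sample at the edge
        frac = (i % 2) / 2
        out.append((1 - frac) * row[lo] + frac * row[hi])
    return out


def upsample_2x(plane):
    """Upsample a chroma plane 2x horizontally, then 2x vertically."""
    wide = [upsample_row(r) for r in plane]
    cols = [upsample_row(list(c)) for c in zip(*wide)]
    return [list(r) for r in zip(*cols)]


print(coded_height(1080))            # luma height actually coded
print(chroma_size_420(1920, 1088))   # Cb/Cr plane size
tiny = [[0, 100], [100, 200]]        # made-up 2x2 chroma plane
for row in upsample_2x(tiny):
    print(row)
```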