
Everything posted by Stephen Baldassarre

-

Good Old Fashion Light Meter

Stephen Baldassarre replied to Stephen Baldassarre's topic in Lighting for Film & Video

I figure out the camera settings I want and light to that. I'd be hard-pressed to get satisfying results shooting in anything less than about 60 lux.

-

I use panel lights a lot because I'm generally either creating very flat lighting with no obvious shadows and then adding a key to create a single, distinct shadow, or using the panels to fill in shadows when working with available light. Those Amarans with soft boxes could achieve a similar result (then add one without a soft box for the key). I haven't worked with them personally, though. I actually don't have any LED spots, as I like having real tungsten as my key for better color rendering.

-

Check out some of the old Kodak film brochures. Many of them have frames from actual theatrical films with the relative exposure noted for different parts of the frame. That'll help you develop a baseline for evaluating other images. I seem to remember an engineer from Kodak sending me a book with a handful of images with relative exposures notated on them; I think I lost it years ago, though. Kodak also has a great deal of material on YouTube. Check out the following video, around 11:08.

-

That would be a decent start, though if I were a student on an extremely limited budget (as I once was), I would get a set of obsolete tungsten lights that theaters, clubs, churches, etc. are throwing away. I STILL bring out my old ETC PAR lights and DeSisti 1K Fresnels. I'll often set up an arc of fill lights with panel lights, like the FotodioX Pro LED P60, to bring my exposure up to maybe -1 stop with no shadows, then add a 575W PAR with an oblong lens or a 1K Fresnel for the key to bring exposure up to full. I have some relatively tall but inexpensive stands like Impact Pro 10'8" stands, among others, but I also have some nice, hefty 14' C-stands. If you do wind up getting those Amarans, I would strongly suggest soft boxes to go with them.
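The fill-then-key approach works because light from separate sources adds linearly at the subject, while stops are a base-2 logarithmic scale. Here's a minimal Python sketch of that arithmetic, assuming each source is described by the exposure (in stops relative to the target, 0 = full exposure) it would give on its own, and that the contributions simply add. `combine_stops` and `key_needed` are hypothetical helper names for illustration.

```python
import math


def combine_stops(*stops: float) -> float:
    """Combine light contributions, each given in stops relative to the
    target exposure, into one total in stops.

    Illuminance adds linearly, so each stop value is converted to a linear
    ratio (2 ** stops), the ratios are summed, and the sum goes back to stops.
    """
    total_ratio = sum(2.0 ** s for s in stops)
    return math.log2(total_ratio)


def key_needed(fill_stops: float, target_stops: float = 0.0) -> float:
    """Key level (stops relative to target, measured on its own) that brings
    a given fill level up to the target. The fill must be below the target."""
    remaining = 2.0 ** target_stops - 2.0 ** fill_stops
    if remaining <= 0:
        raise ValueError("fill already meets or exceeds the target")
    return math.log2(remaining)


if __name__ == "__main__":
    # Fill at -1 stop plus a key that reads -1 stop by itself
    print(combine_stops(-1.0, -1.0))
    # How strong the key must be for a few fill levels
    for fill in (-1.0, -2.0, -3.0):
        print(fill, round(key_needed(fill), 2))
```

So a fill at -1 stop needs a key that would read -1 stop on its own, which is also why key plus fill versus fill alone works out to a 2:1 ratio. With the fill down at -2 stops, the key has to carry more of the load (about -0.4 stop on its own).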

-

Good Old Fashion Light Meter

Stephen Baldassarre replied to Stephen Baldassarre's topic in Lighting for Film & Video

My first light meter was (and still is) an old GE I bought for $5 from a clerk at a local camera store. I mean, it was his personal first light meter, and he sold it to me when I was in high school. It still works and is fairly accurate, but it only measures footcandles, which you then enter into a rotary computer on the case. I have an old, lower-end Sekonic that works similarly, but I like the Gossen better. Now here's another consideration: I've heard a few people say that older meters don't play well with LED lighting. I still work primarily with tungsten lighting, but I do some hybrid stuff as well and have never noticed a difference. I tried looking up the spectral response of silicon photocells, but it gets kind of murky. Some types are noticeably more efficient at the blue end of the spectrum, while others are fairly flat across the spectrum. I suppose I could grab an RGB light and test to see which flavor my meter has.

-

It depends on the projector and the shutter angle. DLPs can introduce colored flicker; LCDs generally won't. The narrower the shutter angle, the worse the flicker will be. LASER and LED are just light sources, as opposed to the various other types of lamps projectors commonly use. The lamp itself isn't the source of flicker; the imaging technology is, as many DLPs use spinning color wheels to sequentially isolate red, green and blue. If I could choose my projector, I'd probably prefer a 3-LCD projector with a halogen lamp, as that would *generally* render best on film. I'm assuming you're using tungsten-balanced lighting as well. LED and LASER sources are likely going to be 5600K or higher, so they'll look blue on camera relative to tungsten lighting.
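The shutter-angle effect can be illustrated with a toy model of a sequential-color DLP. This is a minimal Python sketch, assuming a wheel with three equal red, green and blue segments (real wheels often add white or other segments and spin at various multiples of the frame rate), a hypothetical wheel rate of 120 revolutions per second, and no sync between wheel and camera. It sweeps every wheel position at the moment the shutter opens and reports the worst-case deviation of any one color's share of the exposure from an even 1/3.

```python
import math

N_SEGMENTS = 3  # assumed: equal R, G, B segments, no white segment


def segment_time(a: float, b: float, period: float, segment: int) -> float:
    """Seconds between times a and b (0 <= a <= b) that the given color
    segment is in the light path, for a wheel of equal-size segments."""
    seg_len = period / N_SEGMENTS

    def cumulative(x: float) -> float:
        # Time spent in `segment` from t = 0 up to t = x
        turns = math.floor(x / period)
        into_turn = x - turns * period
        partial = min(max(into_turn - segment * seg_len, 0.0), seg_len)
        return turns * seg_len + partial

    return cumulative(b) - cumulative(a)


def worst_color_imbalance(fps: float, shutter_deg: float, wheel_hz: float,
                          steps: int = 1000) -> float:
    """Largest deviation of any one color's share of a frame's exposure from
    an even 1/3, over all wheel positions at the moment the shutter opens."""
    exposure = (shutter_deg / 360.0) / fps
    period = 1.0 / wheel_hz
    worst = 0.0
    for k in range(steps):
        start = period * k / steps  # wheel phase when the shutter opens
        for seg in range(N_SEGMENTS):
            share = segment_time(start, start + exposure, period, seg) / exposure
            worst = max(worst, abs(share - 1.0 / N_SEGMENTS))
    return worst


if __name__ == "__main__":
    for angle in (180.0, 90.0, 45.0):
        imbalance = worst_color_imbalance(24.0, angle, 120.0)
        print(f"{angle:5.1f} deg shutter: worst imbalance {imbalance:.3f}")
```

Under those assumptions, a 180° shutter at 24fps spans 2.5 wheel turns, so the leftover half-turn can shift one color's share by roughly 7 percentage points, while a 45° shutter covers well under one turn and can miss a color entirely. An exposure that's an exact multiple of the wheel period comes out balanced, and an LCD projector, showing all three colors at once, avoids the problem altogether.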

-

Good Old Fashion Light Meter

Stephen Baldassarre replied to Stephen Baldassarre's topic in Lighting for Film & Video

That actually doesn't bother me. I hold the meter in front of my face, point it forward, click, then look at the meter. Since it retains the reading for a few seconds, I have time to turn the dial afterward. Sometimes when I'm lighting for stage, I'll turn it sideways and point it toward the audience while I walk across the stage, holding the button to watch the needle move. VERY HANDY! I've been suspecting I might have to bite the bullet on this one and "modernize". I feel like a simple software update could make a newer meter do what I want, though.

-

Augmenting In-Shot Practicals

Stephen Baldassarre replied to Olivier Metzler's topic in Lighting for Film & Video

I generally add an arc of comparatively low-power lights for fill, or possibly bounce a spot off a large card or white wall to fill the shadows a little.

-

I have several light meters but have an affinity for the Gossen Luna Pro SBC. I currently have two of them, but they won't last forever; I broke one a year ago by dropping it on a concrete floor. They're mechanical devices, and the domes on some units I see online are obviously yellowed; mine will probably do the same over time. The thing about this meter: you can take a reading and turn the dial until the needle reaches "0", and then every combination of F-stop and shutter speed on the dial will yield a good exposure. What makes this my favorite meter is that I can leave the dial set and click the button to see "+1.5 stops", or take reflected-light readings to see how highlights and shadows will render. I can go on-scene, set the meter for, say, 100 ISO, 1/60, F4, and the meter will simply show how many stops over or under I am in a given area. Now, I know you can SORT of do that with modern meters, but they all seem to do it in a less intuitive way. For instance, instead of saying "-1" when I'm lighting for F4, it'll say F2.8. Also, I don't like navigating menus or clicking through options to get it to behave the way I like (I knew a DP who just set his meter to show lux and didn't bother with anything else). The dials just stay where I put them. It's a minor nitpick, I get it, but I feel I can work more comfortably with a meter that's older than I am than with the newer ones I've used. Do any of you know of a MODERN meter that shows relative exposure in stops?
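The kind of readout described above (a preset ISO, shutter and F-stop, with the meter showing stops over or under) can be approximated from a plain incident reading using the incident-light calibration equation N²/t = E·S/C, where N is the f-number, t the shutter time in seconds, E the illuminance in lux, S the ISO speed, and C the meter's calibration constant. This is a minimal Python sketch; C = 250 is an assumption (roughly typical for flat receptors, while dome meters are often calibrated closer to 320 to 340), and the function names are hypothetical. A footcandle conversion is included for meters like the old GE.

```python
import math

LUX_PER_FOOTCANDLE = 10.764  # 1 footcandle = 1 lumen per square foot


def f_number_for(lux: float, iso: float, shutter_s: float,
                 c: float = 250.0) -> float:
    """f-number giving a nominal exposure for an incident reading, from
    N^2 / t = E * S / C (C is an assumed calibration constant)."""
    return math.sqrt(lux * iso * shutter_s / c)


def stops_from_target(lux: float, iso: float, shutter_s: float,
                      f_number: float, c: float = 250.0) -> float:
    """Stops over (+) or under (-) a preset ISO / shutter / F-stop.

    One stop doubles N^2, so the offset is log2 of the squared ratio between
    the f-number the light supports and the f-number you've chosen.
    """
    ideal = f_number_for(lux, iso, shutter_s, c)
    return 2.0 * math.log2(ideal / f_number)


if __name__ == "__main__":
    # Preset: 100 ISO, 1/60, F4, as in the example above
    for lux in (600.0, 1200.0, 2400.0, 3400.0):
        offset = stops_from_target(lux, 100, 1 / 60, 4.0)
        print(f"{lux:6.0f} lux -> {offset:+.1f} stops")
    # A footcandle reading converted to lux, then to an f-number
    n = f_number_for(100 * LUX_PER_FOOTCANDLE, 100, 1 / 48)
    print(f"100 fc at 100 ISO, 1/48: F{n:.1f}")
```

Under those assumptions, 1200 lux reads as -1 stop against an F4 preset, which is the same light a conventional meter would report as F2.8, and 100 footcandles at 100 ISO and 1/48 works out to roughly F3.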

-

This was from a 16mm shoot many, many years ago. I think the stock was 7205, 24fps, T2, no filters or artificial lighting. The first movie I directed had an indoor fireplace scene. I shot it at 200 ISO and F2 as well, though it was a smaller fire and the subject was closer to it. I'm afraid I don't have a screenshot at the moment. You can put silver reflectors nearby to add some fill.

-

Getting colored lighting right

Stephen Baldassarre replied to Jon Bel's topic in Lighting for Film & Video

Just a quick note on the poor rendition of red on film. Blue is the first layer, followed by green, then red. Not only is the red layer slightly farther from the focal point of the lens, but the light reaching it has also scattered through the other emulsion and filter layers. That's one of the reasons photochemical composite shots were traditionally done with blue screen. Skin tone also contains very little blue, but that's beyond this topic. I also suspect that red is more susceptible to light reflecting off the antihalation coating. It's dark, but not 100% absorptive, and any light bouncing off that coating has to pass through the color filters a second time to reach its respective emulsion layer. The red layer essentially has direct line of sight with the back of the film. This is a good look into the structure of film: http://www2.optics.rochester.edu/workgroups/cml/opt307/spr10/shu-wei/

Mr. Mullen, those screenshots look fantastic! They give the impression of red, but pure red doesn't exactly exist here. Even deep red gels still pass a much broader spectrum than red LEDs. You also have splashes of full color, which give the eye a reference point to maintain its own white balance. It's a stark contrast to many modern movies, where entire scenes are digitally tinted orange, blue, etc. Newer movies feel very "alien" by comparison, mostly because a lot of graders seem to screw with the color without reason. I say this immediately after throwing out a LUT given to me alongside some raw video, shot with fairly heavily saturated red and magenta lights, in favor of a simple log conversion with a slight manual tweak on my end. The guy shot some amazing stuff, but his grade made it look just plain weird.

-

Specialty Cardboard for Flags?

Stephen Baldassarre replied to Stephen Baldassarre's topic in Lighting for Film & Video

Sure thing. Like I said, it's not exactly what I wanted, but it's close enough for this project. https://www.hobbylobby.com/Art-Supplies/Painting-Canvas-Art-Surfaces/Art-Paper-Boards/Blacktop-Black-Core-Matboard---32"-x-40"/p/107562

-

Specialty Cardboard for Flags?

Stephen Baldassarre replied to Stephen Baldassarre's topic in Lighting for Film & Video

I'm familiar with the blackened foil. It IS good stuff, though a bit dangerous to handle. I wound up going back to Hobby Lobby again and this time found something close enough for my purposes. Thanks!

-

LED Lighting for 16mm?

Stephen Baldassarre replied to Lilly Lewin's topic in Lighting for Film & Video

Don't be afraid to look on the used market for some 1K Fresnels. I'm sure you can get them super cheap or even free. I'd offer you some of mine, but the shipping cost would probably be more than the fixtures are worth. :D

-

When shooting indoors, I try to always shoot around F4, unless I'm going for a special look. I usually don't have the budget to do much lighting reinforcement outdoors, but with a good ND filter I can generally get between F4 and F8. I seem to remember reading that Josh Becker generally lights everything to F5.6. It's not as hard as it sounds unless you're shooting with available light.
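Getting from the metered stop down to a target like F4 to F8 outdoors is simple arithmetic: each full stop is a factor of √2 in f-number, and ND filters are commonly labeled by optical density, with 0.3 being about 1 stop (log10 2 ≈ 0.301). A minimal Python sketch, assuming ISO and shutter stay fixed and the meter's suggested f-number is known; `nd_for` is a hypothetical helper, and the F16 reading in the example is made up for illustration.

```python
import math


def nd_for(metered_f: float, target_f: float) -> tuple[float, float]:
    """Stops of ND needed to shoot at target_f where the meter calls for
    metered_f (same ISO and shutter), and the matching optical density."""
    if target_f > metered_f:
        raise ValueError("target is already darker than the metered stop")
    stops = 2.0 * math.log2(metered_f / target_f)  # f-number doubles every 2 stops
    density = stops * math.log10(2.0)              # about 0.3 density per stop
    return stops, density


if __name__ == "__main__":
    for target in (4.0, 5.6, 8.0):
        stops, density = nd_for(16.0, target)
        print(f"meter says F16, shoot at F{target}: "
              f"{stops:.1f} stops (ND {density:.1f})")
```

Marked f-numbers are rounded (F5.6 is really about 5.66), so a result like 3.03 stops simply means an ND 0.9.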

-

LED Lighting for 16mm?

Stephen Baldassarre replied to Lilly Lewin's topic in Lighting for Film & Video

The main problem with most LED fixtures, even fairly high-dollar video lights, is that there's very little energy between deep blue and green, virtually no cyan, and little in the deep red range. What I usually do is use LEDs for my fill lights and then add a tungsten key; that works pretty well.

-

Hello, it's been a while since I've been here, but I need a very specific material I can't find anywhere. When I worked in television, years ago, we had large sheets of what was basically VERY heavy, stiff construction paper. This stuff would stay flat, but if you intentionally bent it, it would stay in place, and it held up to high temperatures from being clipped to barn doors on 2K and 5K Fresnels. If you tore it, you could see that it was black through and through, not just on the surface like normal construction paper. I really need some of this stuff, and none of the lighting/photography places have any clue about it, nor do the craft stores. Does anybody know where I can get something like that?

I have to replace a projection screen in an auditorium. Ambient spill light is bad enough on the current screen, but they want the new screen to be hung lower. I measured a full stop more ambient light where the new bottom of the screen is going, and I need to extend the barn doors substantially to get the hard cut line needed to light people on the stage without washing out the screen. I made some extensions, turning 6" barn doors into 16" ones, out of 30-gauge sheet metal and high-temp paint. That worked perfectly for the ETC barn doors, as I could just tighten the bolts to hold them in place. The DeSisti barn doors won't hold the weight, and regular construction paper just plain DID NOT WORK. :D I can't use regular flags and C-stand parts due to the construction of the ceiling. Really, that stuff we used in television would be perfect. Any advice would be much appreciated, thank you!

-

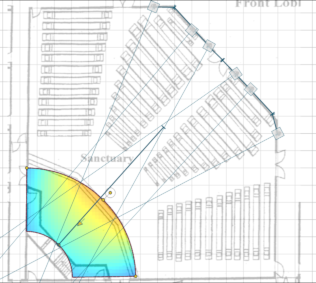

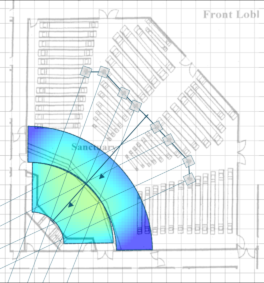

This is sort of off-topic for cinema but related. I was hired to set up a video streaming system for a church. Since they were in a hurry to get up and running, I set up temporary lighting on their balcony going purely by instinct (and a light meter, of course). I have the entire stage lit within 1/2 stop using six Fresnels, and it looks good on camera. I want to be a bit more "scientific" for the final version, where the lighting will be mounted to the ceiling and there will be eight fixtures total.

I did an experiment where I replicated my temp lighting in EASE Focus, which is speaker coverage software. I chose a speaker model that radiated (in the high frequencies) similarly to a Fresnel and adjusted the virtual test frequency until I found the one that most closely matched the lights' beams. See attached. The results are consistent with my practical observations, with measurements taken at eye level. The map is set to cover a 1-stop range, where bright red (none showing) is the equivalent of +1/2 stop and deep blue (none showing) is -1/2 stop, with green/yellow being on target. The 2nd pic is a quickie experiment for a possible lighting scenario; it's much better than the current temp lighting, with everything within 1/4 stop (but I probably can't fully trust it). Sound does not behave the same way as light, as similar as it may be in some respects.

Is there software out there that is DESIGNED for lighting? I feel like that would be a lot easier to use than speaker placement software, for certain. Choose a fixture type, beam width, power, etc., place it in the room, adjust the angles and barn doors, done. BTW, the grid is 5' spacing. It's a pretty big stage. The 2nd "zone" in the 2nd pic accounts for the difference in floor elevation, as they sometimes have people talk in front of the stage. Thank you!
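The basic photometry behind a coverage map like this is simple enough to script: each fixture contributes I·cosθ/d² (candela divided by feet squared gives footcandles), the contributions add, and the total can be mapped in stops relative to a target, like the EASE plots. This is a minimal Python sketch, not real lighting-design software: it assumes point sources, a hypothetical cos^n beam falloff standing in for a Fresnel's actual photometric data, no barn doors, spill or bounce, and made-up fixture positions and intensities. It evaluates a vertical surface facing the audience at face height on a 5' grid.

```python
import math
from dataclasses import dataclass

Vec = tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def length(a: Vec) -> float:
    return math.sqrt(dot(a, a))


@dataclass
class Fixture:
    pos: Vec          # fixture location in feet
    aim: Vec          # point the beam is centered on, in feet
    peak_cd: float    # hypothetical on-axis intensity in candela
    falloff_n: float  # hypothetical cos^n exponent in place of real photometrics

    def intensity_toward(self, point: Vec) -> float:
        axis, ray = sub(self.aim, self.pos), sub(point, self.pos)
        cos_off_axis = dot(axis, ray) / (length(axis) * length(ray))
        return self.peak_cd * cos_off_axis ** self.falloff_n if cos_off_axis > 0 else 0.0


def illuminance_fc(fixtures: list[Fixture], point: Vec, normal: Vec) -> float:
    """Footcandles on a small surface at `point` facing unit vector `normal`,
    by the inverse-square and cosine laws."""
    total = 0.0
    for f in fixtures:
        to_light = sub(f.pos, point)
        d = length(to_light)
        cos_incidence = dot(to_light, normal) / d
        if cos_incidence > 0:  # only light striking the front of the surface
            total += f.intensity_toward(point) * cos_incidence / d ** 2
    return total


def stop_map(fixtures, xs, ys, height, normal, target_fc):
    """Exposure offset in stops from target_fc at each grid point."""
    rows = []
    for y in ys:
        row = []
        for x in xs:
            e = illuminance_fc(fixtures, (x, y, height), normal)
            row.append(math.log2(e / target_fc) if e > 0 else float("-inf"))
        rows.append(row)
    return rows


if __name__ == "__main__":
    # Two hypothetical ceiling fixtures out front, aimed at face height (5.5 ft)
    rig = [Fixture((-10.0, -25.0, 20.0), (-8.0, 5.0, 5.5), 40000.0, 8.0),
           Fixture((10.0, -25.0, 20.0), (8.0, 5.0, 5.5), 40000.0, 8.0)]
    grid = stop_map(rig, xs=range(-15, 20, 5), ys=range(0, 20, 5),
                    height=5.5, normal=(0.0, -1.0, 0.0), target_fc=50.0)
    for row in grid:
        print(" ".join(f"{s:+.2f}" for s in row))
    finite = [s for row in grid for s in row if math.isfinite(s)]
    print(f"spread: {max(finite) - min(finite):.2f} stops")
```

Swapping in measured or manufacturer-published intensity data and the actual hang positions would make the spread figure comparable to the 1/2-stop and 1/4-stop targets described in the post.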

-

Help with practical effect

Stephen Baldassarre replied to Osman Arslan's topic in Visual Effects Cinematography

I look forward to seeing some shots! Music videos have always been my favorite projects to shoot because you can get away with just about anything you want. If you can pull off some effect, great! If it doesn't work, it's part of the art form, so no big deal. It's like when I shot a music video and luma-keyed some ethereal junk into the background just because it was otherwise a plain black background. The luma key ate into some of the foreground, but that just made it cooler!

-

Easiest method for Mirror-duplication shots

Stephen Baldassarre replied to Max Field's topic in Visual Effects Cinematography

That looks cool! I love practical effects. One of my favorites, for a music video, was a shot that waved and distorted. Nobody believed I didn't use some computer plugin (and this was in the 90s); I just shot downward through a Pyrex pan with water in it.